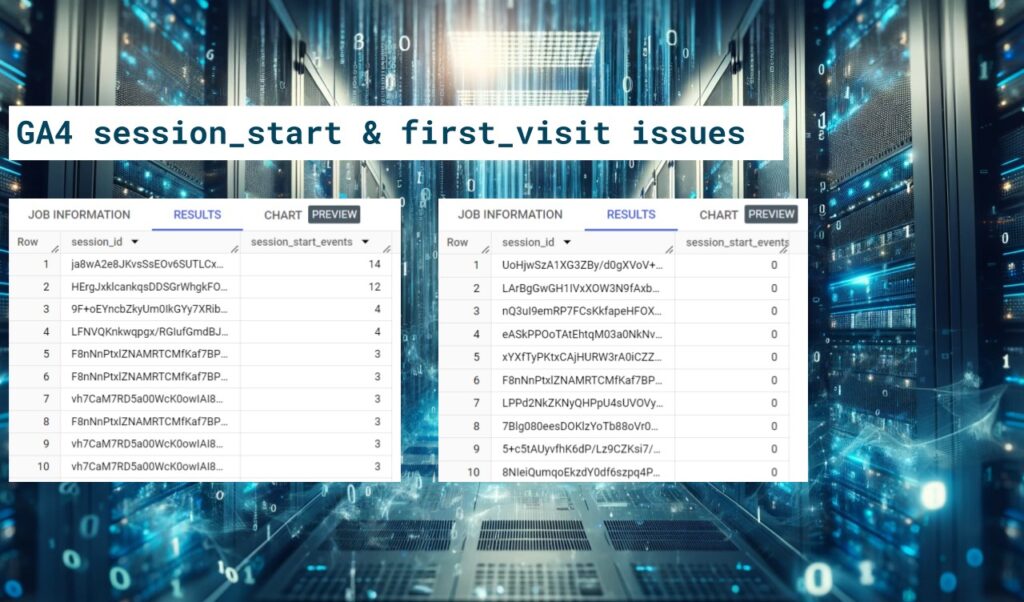

Why you should avoid using the session_start and first_visit events in GA4

A recent fix by Google makes the session_start and first_visit events more consistent by including event parameters, such as the traffic source details, that used to be missing. However, there are still many issues with these two events. At best, they are slightly off; at worst, they are complete junk.

Why you should avoid using the session_start and first_visit events in GA4 Read More »